As mentioned in the video here: https://www.reddit.com/r/Hugston/ show proof of concept how is possible and compatible to work at home with big context models using every ai model, big or small, whatever architecture, so long is converted in GGUF.

Keypoints:

Keypoints:

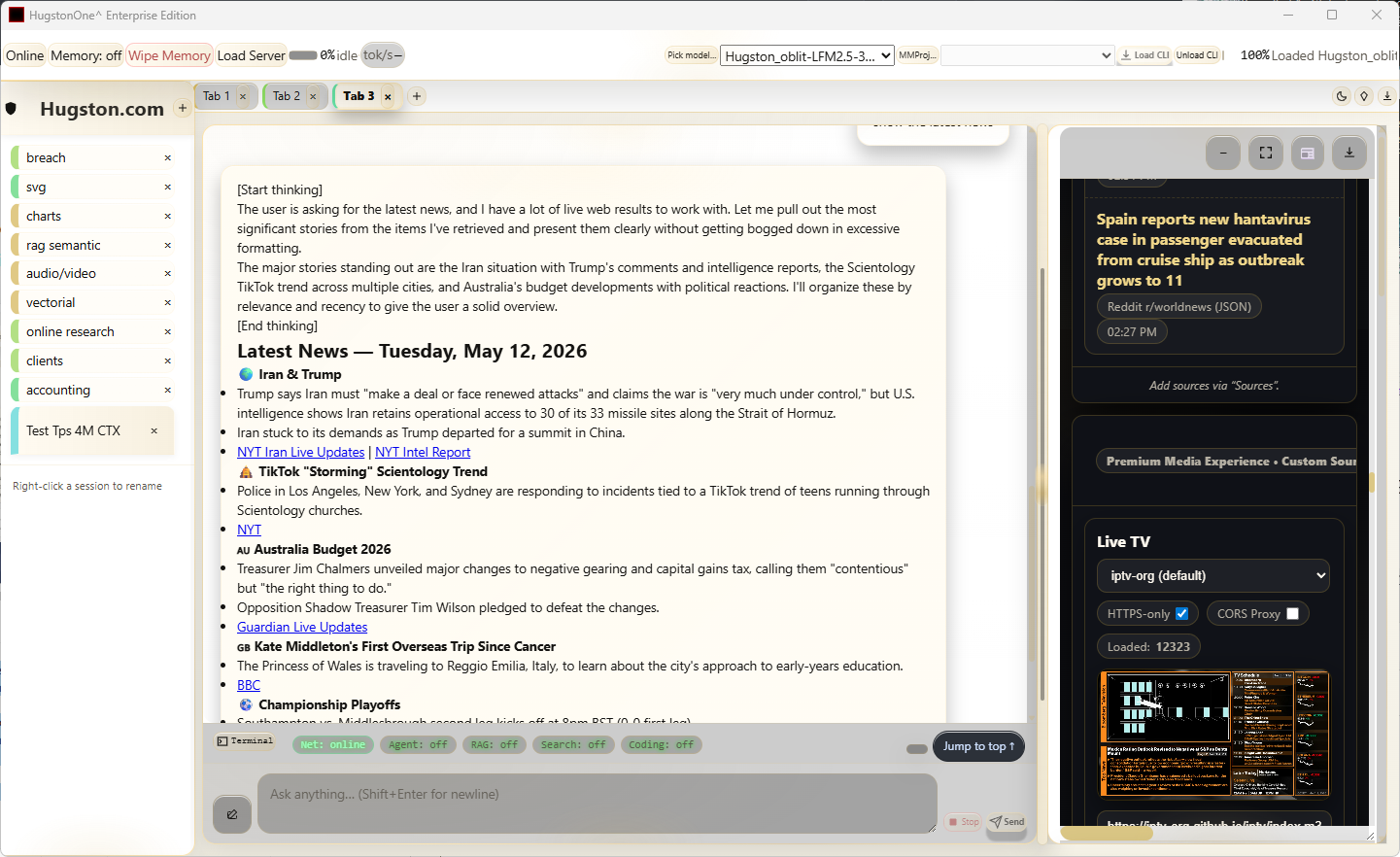

We testing tps of some models loaded at 4 million ctx, Lfm 350B, Qwen 0.8B, and a 35B-q4. Gpu+40-80gb ram.

The main utility is that:

-

1 It can RAG/process large docs, no ctx constrains up to 4 million ctx (more ctx to be tested).

-

2 No need for specific model (works with all models).

-

3 being in the same session keeping memory and thread.

Ofc loops depending on model quality and size but still useful.

Speed from lfm~80, Qwen 0.8B ~30, 35B ~6-12 tps.

We will be testing further by using the actual inference input/output to 1 million but loading the model 4million ctx, then we will push it further to 10 million. It can load and work in a consumer hardware. This will finally help us to understand hwo to optimize the cache and turbo or not and multi token prediction.

Today started tests in a very old Lenovo 4gb ram computer. LFM is promising, in rag and vision. It works alla Grande at acceptable tps speed.

We have already developed an enterprise prototype that works very fast in consumer hardware with large amounts of data.

At this point the next goal is to make possible the 10 million ctx in 128 gb ram, (at least 35b model) which will allow Rag in range of many terabytes with vectorial and semantic methods in seconds.

Hopefully we will have some time soon to upgrade the community edition.

Hugston Team.

Comments